Over the past 6 months of working with a particular client, we’ve generated $470k in quarterly incremental revenue through split-testing (that’s $1.88 million in additional revenue on an annualized basis). Not bad!

Now, the more interesting part is that we’ve won only 51% of all the split-tests that we’ve run for them.

Huh?

How could we create over $1.8 million in revenue and lose half the split-tests that we ran?

Well, buckle up because we’re going to prove why it’s mathematically better for you to lose (or tie) a large portion of your split-tests.

Split-Testing Background

Let’s make sure we’re all on the same page with split-testing.

We’re gonna keep this brief (this would be a whole discussion in and of itself).

If you want to read further about it, click here.

Split-testing is the mechanism we use to perform Conversion Rate Optimization (or, as we like to say, Conversion Revenue Optimization).

You don’t have to split-test to increase conversion rate, but if you like to make decisions based on data and not feelings, you kinda-absolutely-100% have to.

Here’s an example of a before and after of a split-test:

Just making changes to your site and “seeing what happens” to the conversion rate is an extremely unscientific way to detect an impact.

What if you sent great traffic one week and bad traffic the next? What if your stock changed? What if buyer behavior changed through no effort of your own?

As marketers, we want to answer the question: which version of my landing page/sales page/checkout/menu/etc. makes more revenue (or converts more sales, gets more contact form submissions, etc.)?

The most efficient and accurate way to do that is to show a portion of traffic version 1, the other portion of traffic version 2, track how much revenue each brings in, then go with the winner.

Like anything, it gets a lot more complex than that, but the crux of it is just answering that big question.
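To make the "show a portion of traffic version 1, the other portion version 2" idea concrete, here is a minimal sketch of one common way to split traffic: hash a visitor ID so the same visitor always lands in the same bucket. This is an assumption for illustration, not how any particular split-testing tool does it; `assign_variant`, the visitor IDs, and the experiment name are all hypothetical.

```python
import hashlib


def assign_variant(visitor_id: str, experiment: str, variant_share: float = 0.5) -> str:
    """Deterministically bucket a visitor into 'control' or 'variant'.

    Hypothetical helper: hashing experiment + visitor ID means a returning
    visitor sees the same version every time.
    """
    digest = hashlib.sha256(f"{experiment}:{visitor_id}".encode("utf-8")).hexdigest()
    # Map the first 8 hex characters (32 bits) to a number in [0, 1).
    bucket = int(digest[:8], 16) / 0x1_0000_0000
    return "variant" if bucket < variant_share else "control"


# Simulate 10,000 made-up visitors and count how the traffic splits.
counts = {"control": 0, "variant": 0}
for i in range(10_000):
    counts[assign_variant(f"visitor-{i}", "homepage-hero")] += 1

print(counts)
```

With a 50/50 share, the two counts should come out close to even, and rerunning the script gives the same split because nothing here is random.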

When you split-test, you get results that look like this, depending on your split-testing software (RIP Google Optimize and Universal Analytics):

You see:

The number of sessions for each variant

The revenue for each variant

The conversion rate

The average order value

The earnings per session (aka revenue per session)

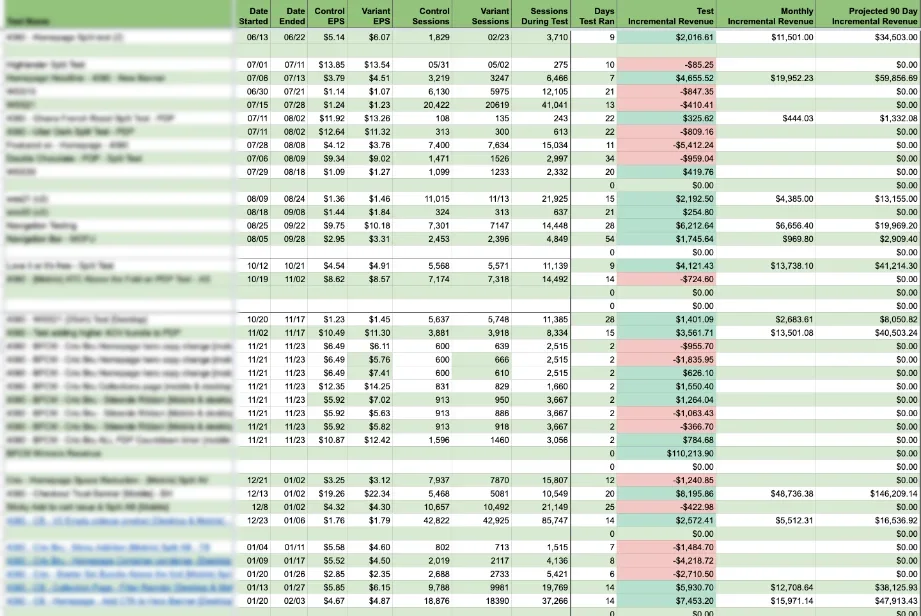
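If you want to see how those columns relate to each other, here is a small sketch that derives conversion rate, average order value, and revenue per session from raw counts. The numbers are made up for illustration, and `VariantResult` is a hypothetical name, not something from a specific testing tool.

```python
from dataclasses import dataclass


@dataclass
class VariantResult:
    """Raw totals for one arm of a split-test (illustrative only)."""
    name: str
    sessions: int
    orders: int
    revenue: float

    @property
    def conversion_rate(self) -> float:
        # Share of sessions that ended in an order.
        return self.orders / self.sessions if self.sessions else 0.0

    @property
    def average_order_value(self) -> float:
        # Revenue per order.
        return self.revenue / self.orders if self.orders else 0.0

    @property
    def revenue_per_session(self) -> float:
        # Revenue per session; equal to conversion rate x average order value.
        return self.revenue / self.sessions if self.sessions else 0.0


# Made-up numbers, not client data.
control = VariantResult("control", sessions=10_000, orders=200, revenue=16_000.0)
variant = VariantResult("variant", sessions=10_000, orders=230, revenue=17_250.0)

for r in (control, variant):
    print(
        f"{r.name}: CVR={r.conversion_rate:.2%} "
        f"AOV=${r.average_order_value:.2f} "
        f"RPS=${r.revenue_per_session:.3f}"
    )
```

Revenue per session is the number to watch, because it folds conversion rate and average order value into one figure.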

With our clients, we use a Google Sheet to keep track of all split-tests and their associated incremental revenue.

Which brings us to…

Incremental Revenue

With split-testing, we are ultimately trying to increase incremental revenue.

Incremental Revenue (or IR for short) is essentially just the difference in revenue between your winning variant and your control.

It answers the question: how much more revenue are we making/will we make due to this improved version?

Below, you can see our spreadsheet again for an example

Based on the test length, we calculate how much incremental revenue the winning variant will provide on a monthly and quarterly basis.
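Here is a rough sketch of how a projection like that could be calculated. It is not the exact formula from our spreadsheet. It assumes the revenue-per-session lift seen during the test holds, that traffic stays at the test-period level, and that the winner then receives all traffic. The function names and figures are hypothetical.

```python
def revenue_per_session(revenue: float, sessions: int) -> float:
    """Revenue divided by sessions for one arm of the test."""
    return revenue / sessions


def project_incremental_revenue(
    control_revenue: float,
    control_sessions: int,
    variant_revenue: float,
    variant_sessions: int,
    test_days: int,
    horizon_days: int,
) -> float:
    """Project incremental revenue over `horizon_days`.

    Assumptions (ours, for illustration): the per-session lift persists,
    daily traffic matches the test period, and the winner gets 100% of it.
    """
    lift_per_session = (
        revenue_per_session(variant_revenue, variant_sessions)
        - revenue_per_session(control_revenue, control_sessions)
    )
    daily_sessions = (control_sessions + variant_sessions) / test_days
    return lift_per_session * daily_sessions * horizon_days


# Made-up 7-day test: control earns $1.50 per session, variant $1.60.
test = dict(
    control_revenue=10_500.0,
    control_sessions=7_000,
    variant_revenue=11_200.0,
    variant_sessions=7_000,
    test_days=7,
)

monthly = project_incremental_revenue(**test, horizon_days=30)
quarterly = project_incremental_revenue(**test, horizon_days=90)
print(f"Monthly IR: ${monthly:,.0f}  Quarterly IR: ${quarterly:,.0f}")
```

A negative result simply means the variant lost, which is your cue to kill it.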

This is really what you’re looking for when split-testing.

Losses vs. Wins

For some shock value, let’s take a look at a sample Incremental Revenue sheet from the client in question:

Lots of red, right? Don’t be scared, I got you.

There is an asymmetric value to losses and wins – one that stacks the deck very much in our favor.

In one sentence: losses are mitigated, wins are perpetual.

In more detail:

When we set a split-test live and our variant is losing, we kill it.

We have “lost” that revenue, because if we had just kept the control, we would have made more sales.

But, as soon as we kill the experiment, we stop losing that revenue immediately.

On the flipside, when we have a split-test that wins, that becomes the new control, and the increase in incremental revenue persists for as long as that variant stays active (until we find a new winner 🙂 )

Let’s look at an example from a client of ours.

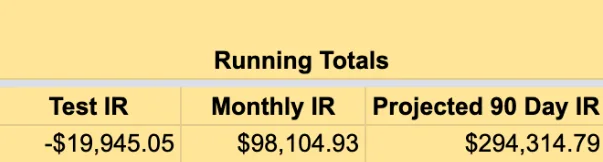

If you add up the test incremental revenue, we lost $1632 on these tests.

But calling this set of tests a failure is a big mistake.

Looking at it over the course of just the testing periods, we lose money. Let’s look at what happens over 90 days.

Voila! That one winning test will produce $21k over the next 90 days.

All in all, we net $19.5k from these tests over a 90-day period. That’s a hell of a nice return.

Let’s look at it from a different angle.

In this specific case, 4 tests accounted for a roughly $5k loss.

Our win accounted for a $21k win.

That means that one winning test is financially equal to SIXTEEN losing tests. Of course, that’s in this specific case with these specific results. But suffice it to say that we see this pattern repeat over and over with clients.

Remember… losers lose for 7 days, winners win for months. And in reality, they can produce revenue in perpetuity, but we typically work in 90-day cycles for judging performance. That makes this a conservative estimate.
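The arithmetic behind those numbers is easy to check yourself. This sketch uses the rounded figures quoted above ($1,632 net loss during the test periods, roughly $5k lost across 4 losing tests, $21k from the winner over 90 days), so the results are approximate.

```python
# Rounded figures quoted in the text above.
test_period_total = -1_632      # sum of test incremental revenue across all tests
winner_90_day = 21_000          # projected 90-day revenue from the one winner
losing_tests = 4
total_losses = 5_000            # approximate combined loss of the losing tests

# Net result over 90 days: test-period losses plus the winner's projection.
net_90_day = test_period_total + winner_90_day

# How many average losing tests one win pays for.
average_loss = total_losses / losing_tests
losses_covered_by_one_win = winner_90_day / average_loss

print(f"Net over 90 days: ${net_90_day:,}")
print(f"Average loss per losing test: ${average_loss:,.0f}")
print(f"One win covers about {int(losses_covered_by_one_win)} losing tests")
```

The net lands in the same ballpark as the $19.5k above (the inputs are rounded), and one win covers roughly sixteen average losses.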

By this point, your ears should be perking up, because this type of asymmetric risk/reward is almost unheard of.

It Gets Even Better

In our previous examples, we see that finding a winner pays our losses and nets us some nice incremental revenue going forward.

That’s actually a simplified case though. We’re assuming that we are being random in our testing and learning nothing from each test, which, if you are split-testing properly, is obviously not the case.

First, before running a test we don’t know if it will win or lose (if we did, we’d just implement it straightaway).

Second, not all losing tests are created equal. Within the umbrella of data that is incremental revenue, there are other data points that provide more clarity as to why we lost.

The only real way to “lose” in CRO is if you fail to learn from your data.

Questions like:

- Did we lose because of AOV or CVR?

- Did we lose with new vs. returning traffic?

- Did we lose on mobile or desktop?

Answers to these questions provide clarity as to why a test was lost. Sometimes, you just lose all around. But often, you’ll find that your test actually won with certain segments, even though it lost overall.

You’ll see that it actually won with [mobile returning] traffic.

So, we’ve actually still created incremental revenue. Maybe we just didn’t put enough effort into the desktop version. Maybe desktop users need to see something different.

Whatever the case is, we take it, learn from it, and continue to iterate. We can also implement it as a personalization shown to users who meet certain criteria.
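Here is a minimal sketch of that kind of segment breakdown. The data is entirely made up (it is not from the client above), and the segment keys and the `segment_incremental_revenue` helper are hypothetical. The idea is to compute incremental revenue per segment and see whether a test that lost overall still won somewhere.

```python
# (sessions, revenue) per arm for each (device, visitor type) segment.
# All numbers are invented for illustration.
segments = {
    ("mobile", "new"): {"control": (4_000, 4_000.0), "variant": (4_000, 3_600.0)},
    ("mobile", "returning"): {"control": (2_000, 3_000.0), "variant": (2_000, 3_600.0)},
    ("desktop", "new"): {"control": (2_000, 3_000.0), "variant": (2_000, 2_400.0)},
    ("desktop", "returning"): {"control": (1_000, 2_500.0), "variant": (1_000, 2_200.0)},
}


def segment_incremental_revenue(control: tuple, variant: tuple) -> float:
    """Variant revenue minus what control would have earned on the same traffic."""
    control_sessions, control_revenue = control
    variant_sessions, variant_revenue = variant
    expected_from_control = control_revenue * variant_sessions / control_sessions
    return variant_revenue - expected_from_control


results = {
    segment: segment_incremental_revenue(arms["control"], arms["variant"])
    for segment, arms in segments.items()
}
overall = sum(results.values())
winning_segments = [segment for segment, ir in results.items() if ir > 0]

for (device, visitor), ir in results.items():
    print(f"{device:8s} {visitor:10s} IR: {ir:+,.0f}")
print(f"Overall IR: {overall:+,.0f}")
print(f"Segments where the variant won: {winning_segments}")
```

In this invented data the test loses overall but wins with mobile returning traffic, which is exactly the kind of segment you might target with a personalization.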

Losses Are like Fixed Costs

Let’s imagine you presented an offer to an astute investor.

Your offer has the following conditions:

Invest $20k over a period of 3 months

In return, you’ll receive $294k per quarter in perpetuity, guaranteed.

Assuming it was legit, legal and everything checked out, every investor would be begging to invest.

I mean, you are more than 14x’ing your initial investment every single quarter ($294k / $20k ≈ 14.7).

If you look below, that’s exactly what happened with this client of ours.

Really, this is what split-testing is all about. You should see your tests as investments in future incremental revenue.

Lose a bit of revenue today for a lot of revenue tomorrow.
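For the investor offer above, the math works out like this. The sketch takes the $20k and $294k figures from the offer and assumes, as the offer does, that the quarterly return holds steady. The `cumulative_net` helper is a hypothetical name.

```python
investment = 20_000          # test-period losses, treated as the up-front cost
quarterly_return = 294_000   # incremental revenue per quarter from the offer

# Return multiple per quarter.
multiple = quarterly_return / investment


def cumulative_net(quarters: int) -> int:
    """Net gain after a number of quarters, assuming the return stays flat."""
    return quarterly_return * quarters - investment


print(f"Quarterly multiple: {multiple:.1f}x")
for q in (1, 2, 4):
    print(f"After {q} quarter(s): ${cumulative_net(q):,} net")
```

The flat-return assumption is the generous part, so treat anything past the first quarter as a rough guide.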

The crazy part is that in a lot of cases, your test incremental revenue can be positive. Meaning, you get paid to generate incremental revenue. Now that is very cool. Here’s an example of that:

Obviously there are costs that go into testing (design, developers, etc.). But for most brands starting out, the internal marketing team can perform quite a few tests before needing outside resources.

Realistically, incremental revenue is difficult to predict beyond a quarter as well. Traffic volume changes, other elements on the site change, etc.

Regardless, your 90 day Incremental Revenue is a fantastic way to measure your success.

Conclusion

Hopefully we’ve provided an argument that makes you excited about finding losers in your split-tests.

Remember:

- One winning test is worth 3x-10x losers (at a minimum)

- Losers are a step toward winners

- Not all losing tests are created equal

- See split-tests as an investment into incremental revenue

Happy testing!

TL;DR: You have a mathematical obligation to be more aggressive in your split-testing. Aim to win about 50% of your tests.